
SQL Server JSON compare

3/21/2023

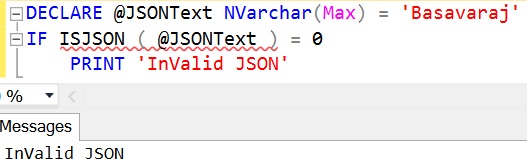

While other database providers have made their place known within the .NET ecosystem, Microsoft's SQL Server (MSSQL) and its Azure flavor are still likely the most popular choice for .NET developers. The engine owes its popularity to SQL Server's long record as a reliable choice. Microsoft support and a large tooling ecosystem make it a safe option for developers and database administrators. As a result, there are few surprises when choosing MSSQL, but it still has some hidden gems. One of them is its ability to store and query JSON data in existing tables.

Storing information as JSON can help reduce interaction complexity between your application and SQL. Storage objects are typically made up of an identifier, sorting columns, and a JSON column. JSON also lets you be more flexible about what you store, because it can hold dynamic data that is hard to model with relational database schemas. .NET developers also use Entity Framework Core to access their databases and let the library generate the SQL. In this post, you'll see how to modify Entity Framework Core to support SQL Server's JSON_VALUE and JSON_QUERY functions.

In MSSQL, you store JSON as nvarchar(max) or some other variation of nvarchar, but storage is only half of the JSON story. What about querying your JSON or retrieving data from the text column? JSON is typically structured, but it isn't a rigid structure like you'd expect from a relational table. Your JSON data can have fields that are scalar values, arrays, or nested objects. With JSON's infinite possibilities, you need mechanisms to access the data appropriately, and that's when you use JSON_VALUE and JSON_QUERY. JSON_VALUE lets you extract a scalar value (think numbers, string values, etc.) from an existing JSON column, while JSON_QUERY extracts an object or an array.

I am experiencing poor performance on a single-row insert/update statement over a table when an nvarchar(max) column holds a few MB of data. The table has an nvarchar(max) NOT NULL column and a clustered primary key created WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON, OPTIMIZE_FOR_SEQUENTIAL_KEY = OFF). Here is an example of an update statement: DECLARE … AS INT = …; DECLARE … AS NVARCHAR(MAX) = '…document…' (~8 MB); SET … = …. I executed this command on the same machine as the DB. This is a development environment, so there is no other workload. I got this performance:

It really surprises me that loading text into SQL Server is this slow, so I am asking whether there is a better way to do it. The document is a big JSON that will not exceed 10 MB of data, and the benchmark above is the worst-case scenario. I have no problem converting it to a BLOB or another data structure if performance improves. If it's a better idea, I can also use a stored procedure to upload the big text.

The server runs Windows Server 2019 with 16 logical processors at 2.1 GHz and Microsoft SQL Server Standard (64-bit) version …41, with 4 GB of reserved RAM and 16 cores licensed. It's a development-only environment. In this case I am the only one working on it, with no other program or scheduled activity running.

For the insert statement I used the data generated by SQL Server (task -> script table -> data only). I have tested again with a different cart (a slightly smaller one); here are the times (client statistics) and the execution plan.

What I am expecting to achieve is an update time under 0.5 s. I have preallocated space in the log file and checked again that the recovery model is set to simple.

Should I start thinking about storing the documents as files? In my scenario the JSON usually starts small and then grows day by day up to the size I am testing.
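The following minimal T-SQL sketch ties the two halves of this post together. It assumes SQL Server 2016 SP1 or later, because JSON_VALUE and JSON_QUERY arrived in SQL Server 2016 and CREATE OR ALTER arrived in 2016 SP1. The table dbo.CartDocument, its columns CartId and Document, and the procedure dbo.UpdateCartDocument are hypothetical names, because the question's own identifiers were not preserved. The procedure receives the document as an NVARCHAR(MAX) parameter, as the poster suggests, instead of embedding a multi-MB literal in a script. Treat it as an illustration rather than a guaranteed speed-up, and time it against your own workload. The queries after the procedure show JSON_VALUE returning scalars, JSON_QUERY returning objects or arrays, and one way to compare an extracted value.

```sql
-- Hypothetical names throughout: dbo.CartDocument, CartId, Document, dbo.UpdateCartDocument.
-- GO is the SSMS/sqlcmd batch separator; CREATE PROCEDURE must start its own batch.
CREATE TABLE dbo.CartDocument
(
    CartId   INT           NOT NULL,
    Document NVARCHAR(MAX) NOT NULL,
    CONSTRAINT PK_CartDocument PRIMARY KEY CLUSTERED (CartId)
);
GO

-- Receives the whole JSON document as a parameter instead of a script literal.
CREATE OR ALTER PROCEDURE dbo.UpdateCartDocument
    @CartId   INT,
    @Document NVARCHAR(MAX)
AS
BEGIN
    SET NOCOUNT ON;

    UPDATE dbo.CartDocument
    SET    Document = @Document
    WHERE  CartId = @CartId;
END;
GO

-- Seed one row, then replace its document through the procedure.
INSERT INTO dbo.CartDocument (CartId, Document)
VALUES (1, N'{}');

EXEC dbo.UpdateCartDocument
    @CartId   = 1,
    @Document = N'{"customer":{"name":"Ada"},"items":[{"sku":"A1","qty":2}],"total":20}';

-- JSON_VALUE extracts scalars; JSON_QUERY extracts objects and arrays.
-- In the default lax mode, asking either function for the wrong kind of value returns NULL.
SELECT
    JSON_VALUE(Document, '$.customer.name') AS CustomerName,  -- N'Ada'
    JSON_QUERY(Document, '$.items')         AS Items,         -- the array as JSON text
    JSON_VALUE(Document, '$.items')         AS ItemsAsScalar, -- NULL: not a scalar
    JSON_QUERY(Document, '$.total')         AS TotalAsJson    -- NULL: not an object or array
FROM dbo.CartDocument
WHERE CartId = 1;

-- JSON_VALUE returns NVARCHAR(4000), so cast before comparing numerically.
SELECT CartId
FROM dbo.CartDocument
WHERE CAST(JSON_VALUE(Document, '$.total') AS DECIMAL(10, 2)) > 10;

-- Check the recovery model of the current database, as mentioned in the question.
SELECT name, recovery_model_desc
FROM sys.databases
WHERE name = DB_NAME();
```

Keeping JSON_VALUE for scalars and JSON_QUERY for fragments matters when you compare documents. A comparison on JSON_VALUE works on text unless you cast it, and a path that points at the wrong kind of value silently yields NULL in lax mode rather than an error.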
